Video Conferencing in HTML5: WebRTC via Web Sockets

A bit over a week ago I gave a presentation at Web Directions Code 2012 in Melbourne. Maxine and John asked me to speak about something related to HTML5 video, so I went for the new shiny: WebRTC - real-time communication in the browser.

I only had 20 minutes, so I had to make it tight. I wanted to show off video conferencing without special plugins in Google Chrome in just a few lines of code, as is the promise of WebRTC. To a large extent, I achieved this, but I made some interesting discoveries along the way. Demos are in the slide deck.

UPDATE: Opera 12 has been released with WebRTC support.

Housekeeping: if you want to replicate what I have done, you need to install Google Chrome 19 or later. Then go to chrome://flags and enable the MediaStream and PeerConnection experiments. Restart your browser and you can experiment with this feature. Big warning up front: it’s not production-ready, since the spec is still changing and no other browser has a compatible implementation yet.

Here is a brief summary of the steps involved to set up video conferencing in your browser:

- Set up a video element each for the local and the remote video stream.

- Grab the local camera and stream it to the first video element.

- (*) Establish a connection to another person running the same Web page.

- Send the local camera stream on that peer connection.

- Accept the remote camera stream into the second video element.
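The steps above can be sketched roughly as follows. This is a minimal sketch, not the demo code from the slide deck: it assumes today's standardised API names (`navigator.mediaDevices.getUserMedia`, `RTCPeerConnection`) rather than the experimental interfaces behind the Chrome 19 flags. The function names `setupPeer`, `call` and `handleSignal`, the `sendSignal` callback, the message shapes, and the STUN URL are all hypothetical choices for illustration. `sendSignal` stands in for whatever channel carries the handshake in step (*).

```javascript
// Sketch only: modern standard API names, not the Chrome 19 experimental ones.
// ASSUMPTION: a commonly used public STUN address; swap in your own.
const ICE_CONFIG = { iceServers: [{ urls: "stun:stun.l.google.com:19302" }] };

// Steps 2 to 5 for either party. `env` defaults to the browser's global object;
// it is a parameter only so the sketch can be exercised outside a browser.
async function setupPeer(localVideo, remoteVideo, sendSignal, env = globalThis) {
  // Step 2: grab the local camera and show it in the first video element.
  const stream = await env.navigator.mediaDevices.getUserMedia({ video: true, audio: true });
  localVideo.srcObject = stream;

  // Step 3 (*): create the peer connection; ICE candidates leave via signalling.
  const pc = new env.RTCPeerConnection(ICE_CONFIG);
  pc.onicecandidate = (event) => {
    if (event.candidate) sendSignal({ type: "candidate", candidate: event.candidate });
  };

  // Step 5: show the remote stream in the second video element when it arrives.
  pc.ontrack = (event) => { remoteVideo.srcObject = event.streams[0]; };

  // Step 4: send the local camera stream on the peer connection.
  stream.getTracks().forEach((track) => pc.addTrack(track, stream));
  return pc;
}

// The calling side starts the handshake with an offer.
async function call(pc, sendSignal) {
  const offer = await pc.createOffer();
  await pc.setLocalDescription(offer);
  sendSignal({ type: "offer", sdp: offer.sdp });
}

// Both sides pass every incoming signalling message through this handler.
async function handleSignal(pc, msg, sendSignal) {
  if (msg.type === "offer") {
    await pc.setRemoteDescription({ type: "offer", sdp: msg.sdp });
    const answer = await pc.createAnswer();
    await pc.setLocalDescription(answer);
    sendSignal({ type: "answer", sdp: answer.sdp });
  } else if (msg.type === "answer") {
    await pc.setRemoteDescription({ type: "answer", sdp: msg.sdp });
  } else if (msg.type === "candidate") {
    await pc.addIceCandidate(msg.candidate);
  }
}
```

Step 1 is plain markup: two `<video autoplay>` elements in the page, passed in as `localVideo` and `remoteVideo`. Both parties call `setupPeer`, and only the one initiating the conference calls `call`.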

Now, the most difficult part of all of this - believe it or not - is the signalling that is required to build the peer connection (marked with (*)). Initially I wanted to run completely without a server and just enter the remote party’s IP address to establish the connection. This is, however, not functionality that the PeerConnection object provides [might this be something to add to the spec?].

So, you need a server known to both parties that can broker the handshake to set up the connection. All the examples that I have seen, such as https://apprtc.appspot.com/, use a channel management server on Google App Engine. I wanted it all working with HTML5 technology, so I decided to use a Web Socket server instead.

I implemented my Web Socket server using node.js (code of Web Socket server). The video conferencing demo is embedded in the slide deck in an iframe - you can also use the stand-alone HTML page. Works like a treat.

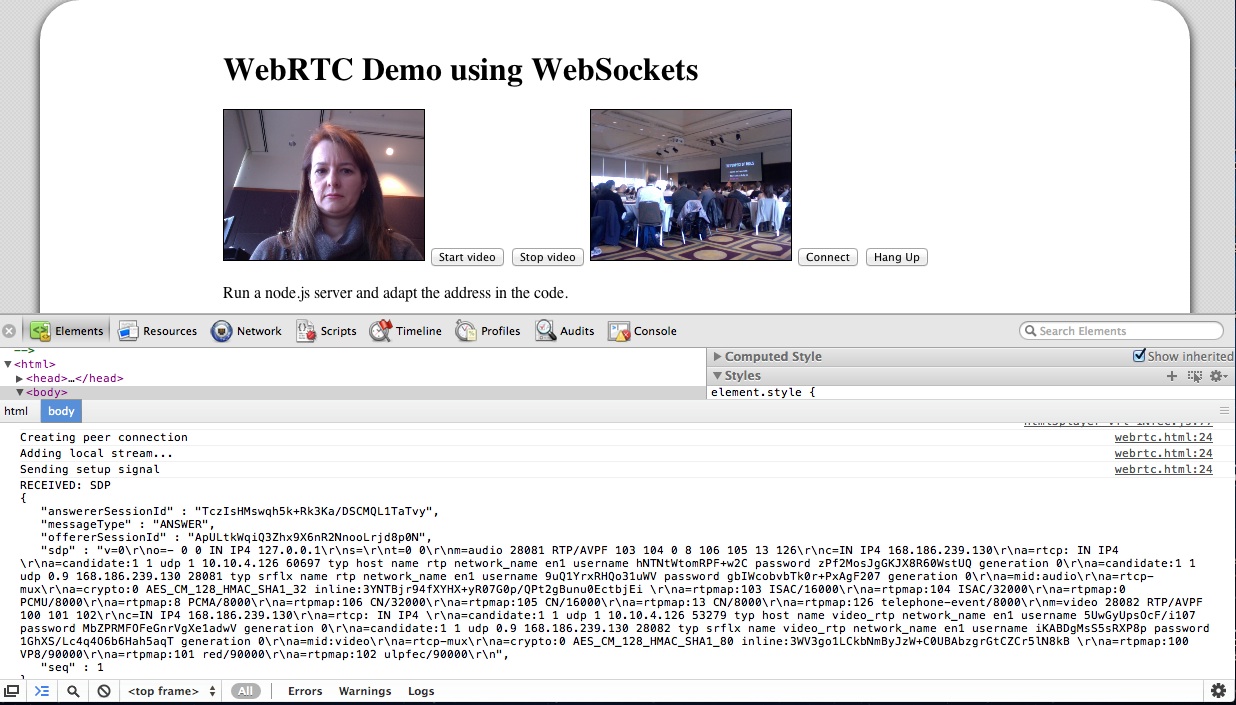
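For a feel of what such a signalling server has to do, here is a minimal sketch of the relay logic only. It is not the server code from the talk: the class name `SignalRelay` and its methods are hypothetical, and it assumes each connected client is an object with a `send(data)` method, which is the shape that WebSocket connection objects typically have. Wiring it up to an actual WebSocket library on node.js is deliberately left out.

```javascript
// Hypothetical relay: forwards each signalling message to every other client.
// ASSUMPTION: a client is any object exposing send(data).
class SignalRelay {
  constructor() {
    this.clients = new Set();
  }

  // Call when a client connects.
  add(client) {
    this.clients.add(client);
  }

  // Call when a client disconnects.
  remove(client) {
    this.clients.delete(client);
  }

  // Pass a message from `sender` on to all other clients, unchanged.
  // Returns the number of clients the message was delivered to.
  relay(sender, data) {
    let delivered = 0;
    for (const client of this.clients) {
      if (client !== sender) {
        client.send(data);
        delivered += 1;
      }
    }
    return delivered;
  }
}
```

The relay never inspects the payload, so the browsers are free to exchange whatever handshake messages the peer connection produces. With exactly two clients connected this amounts to a private channel between the two parties.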

While it is still using Google’s STUN server to get through NAT, the messaging for setting up the connection runs completely through the Web Socket server. The messages that get exchanged are plain SDP message packets with a session ID. There are OFFER, ANSWER, and OK packets exchanged for each streaming direction. You can see some of it in the image below:

I’m not running a public WebSocket server, so you won’t be able to see this part of the presentation working. But the local loopback video should work.
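To make the OFFER, ANSWER, and OK exchange described above a little more concrete, here is a rough sketch of a message envelope that carries a packet type, a session ID, and the SDP text. The JSON layout and the names `encodeSignal`, `decodeSignal` and `nextExpected` are assumptions made for illustration; this is not the wire format that Chrome produced. It also assumes the usual order within one streaming direction: OFFER, then ANSWER, then OK.

```javascript
// ASSUMPTION: illustrative JSON envelope, not the format Chrome actually sent.
const SIGNAL_TYPES = new Set(["OFFER", "ANSWER", "OK"]);

// Serialise one signalling packet for sending over the Web Socket.
function encodeSignal(type, sessionId, sdp = "") {
  if (!SIGNAL_TYPES.has(type)) throw new Error(`unknown signal type: ${type}`);
  return JSON.stringify({ type, sessionId, sdp });
}

// Parse and sanity-check a packet received from the Web Socket.
function decodeSignal(text) {
  const msg = JSON.parse(text);
  if (!SIGNAL_TYPES.has(msg.type)) throw new Error(`unknown signal type: ${msg.type}`);
  if (typeof msg.sessionId !== "string") throw new Error("missing sessionId");
  return msg;
}

// Which packet type should follow the given one in a single streaming
// direction; null means the exchange for that direction is complete.
function nextExpected(type) {
  return { OFFER: "ANSWER", ANSWER: "OK", OK: null }[type];
}
```

Since video flows in both directions, a full conference would run this three-packet exchange twice, once per direction, with the session ID tying the packets of one exchange together.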

At the conference, it all went without a hitch (as long as the wireless played along). I believe you have to host the WebSocket server on the same machine as the Web page; otherwise it won’t work for security reasons.

A whole new world of opportunities lies out there when we get the ability to set up video conferencing on every Web page - scary and exciting at the same time!