WebVTT Discussions at FOMS

At the recent FOMS (Foundations of Open Media Software and Standards) Developer Workshop, we had a massive focus on WebVTT and the state of its feature set. You will find links to summaries of the individual discussions in the FOMS Schedule page. Here are some of the key results I went away with.

1. WebVTT Regions

The key driving force for improvements to WebVTT continues to be the accurate representation of CEA608/708 captioning. As part of that drive, we’ve introduced regions (the CEA708 “window” concept) to WebVTT. WebVTT regions satisfy multiple requirements of CEA608/708 captions:

- support for rollup captions

- support for background color and border color on a group of cues independent of the background color of the individual cue

- possibility to move a group of cues from one location on screen to a different one

- support to specify an anchor point and a growth direction for cues when their text size changes

- support for specifying a fixed number of lines to be rendered

- possibility to specify which region is rendered in front of which other one when regions overlap

While WebVTT regions are designed to satisfy all of the above points, the specification isn’t complete yet and some of these needs are not yet met.

We have an open bug to move a region elsewhere. A first discussion at FOMS seemed to indicate that we’ll have to add syntax for updating a region at a particular time and thus give region definitions a way to be valid only for a certain time frame. I can imagine that the region definitions that we have in the header of the WebVTT file now would have an implicitly defined time frame from the start to the end of the file, but could be overruled by a re-definition anywhere within the WebVTT file. That re-definition needs to provide a start and end time.
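To make the idea of time-limited region definitions concrete, here is a minimal sketch of how a player might pick the definition in effect at a given time. No syntax for timed re-definitions exists yet, so the `RegionDef` class, its fields and the `active_region` helper are all hypothetical; the sketch only assumes that header definitions cover the whole file and that a re-definition carries a start and end time.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RegionDef:
    """Hypothetical in-memory form of a region definition."""
    region_id: str
    settings: dict
    start: Optional[float] = None  # None: header definition, valid from file start
    end: Optional[float] = None    # None: header definition, valid to file end


def active_region(defs, region_id, t):
    """Return the definition of region_id in effect at time t (in seconds)."""
    header = None
    for d in defs:
        if d.region_id != region_id:
            continue
        if d.start is None and d.end is None:
            header = d          # implicit time frame: the whole file
        elif d.start <= t < d.end:
            return d            # a timed re-definition overrules the header
    return header               # None if the region was never defined


defs = [
    RegionDef("r1", {"viewportanchor": "0%,100%"}),                       # header
    RegionDef("r1", {"viewportanchor": "0%,0%"}, start=10.0, end=20.0),   # re-definition
]
```

With these definitions, `active_region(defs, "r1", 15.0)` returns the re-definition, while any time outside the 10 to 20 second window falls back to the header definition.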

We registered a bug to add specifying the width and height of regions (and possibly of cues) by em (i.e. by multiples of the largest character in a font). This should allow us to have the region grow/shrink around the region anchor point with a change of font size by script or a user. em specifications should also be applied to cues - that matches the column count of CEA708/608 better.
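The arithmetic behind growing a region around its anchor point can be sketched as follows. This is only an illustration under simplifying assumptions: 1em is taken to equal the font size in pixels, the height is given in em as well, and the function name `region_box` and its parameters are made up for this example rather than taken from the spec.

```python
def region_box(anchor_x, anchor_y, width_em, height_em, font_px,
               region_anchor=(0.0, 1.0)):
    """Return (left, top, width, height) in pixels for a region sized in em.

    anchor_x, anchor_y: pixel position of the anchor point in the viewport.
    region_anchor: where the anchor sits inside the region, as fractions of
    its width and height; (0.0, 1.0) is the bottom-left corner.
    """
    width = width_em * font_px
    height = height_em * font_px
    left = anchor_x - region_anchor[0] * width
    top = anchor_y - region_anchor[1] * height
    return (left, top, width, height)


# The same 32em x 3em region at two font sizes, anchored at its bottom-left.
small = region_box(100, 400, 32, 3, font_px=16)
large = region_box(100, 400, 32, 3, font_px=20)
```

In both cases the bottom-left corner stays at (100, 400); only the width and height change with the font size, so the region grows upwards and to the right.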

When regions overlap, the original region extension spec already suggested a “layer” cue setting. It will be easy to add it.

Another change that we will ultimately need concerns the “scroll” setting: we will need to introduce support for scrolling text down, from left to right, or from right to left. Vertically scrolling text, for example, seems to be used in some Chinese caption use cases.

2. Unify Rendering Approach

The introduction of regions created a second code path in the rendering spec with some duplication. At FOMS we discussed whether it was possible to unify that. The suggestion is to render all cues into a region. Those that are not part of a region would be rendered into an anonymous region that covers the complete viewport. There may be some consequences to this, e.g. cue settings should be usable across all cues, whether or not they are part of a region, and avoiding cue overlap may need to be done within regions.

Here’s a rough outline of the path of the new rendering algorithm:

(1) Render the regions:

| Setting | Specified Region | Anonymous Region |
|---|---|---|
| width | as given | 100% |
| lines | as given | videoheight/lineheight |
| regionanchor | as given | 0,0 |
| viewportanchor | as given | 0,0 |
| scroll | as given | none |

(2) Render the cues:

- Create a cue box and put it in its region (anonymous if none given).

- Calculate position & size of cue box from cue settings (position, line, size).

- Calculate position of cue text inside cue box from remaining cue settings (vertical, align).
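The outline above could be sketched roughly like this. It is not the spec algorithm: the `Region` class, the dictionary form of a cue, and the choice to fall back to the anonymous region when a cue names an unknown region are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Region:
    width: str
    lines: int
    regionanchor: tuple
    viewportanchor: tuple
    scroll: str


def anonymous_region(video_height, line_height):
    """Region covering the whole viewport, used for cues without a region."""
    return Region(
        width="100%",
        lines=int(video_height // line_height),  # as many lines as fit
        regionanchor=(0, 0),
        viewportanchor=(0, 0),
        scroll="none",
    )


def region_for_cue(cue, regions, video_height, line_height):
    """Step (2a): put the cue box into its region, anonymous if none given."""
    region_id = cue.get("region")
    if region_id in regions:
        return regions[region_id]
    # Assumption: an unknown region id is treated like no region at all.
    return anonymous_region(video_height, line_height)


regions = {"r1": Region("40%", 3, (0, 100), (10, 90), "up")}
plain_cue = {"text": "Hello"}
rollup_cue = {"text": "Hello", "region": "r1"}
```

Positioning the cue box and the cue text inside it would then run the same way for both kinds of region, which is the point of the unification.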

3. Vertical Features

WebVTT includes vertical rendering, both right-to-left and left-to-right. However, regions are not defined for vertical text. Eventually, we’re going to have to look at the vertical features of WebVTT in more detail and figure out whether the spec is working for them and what real-world requirements we have missed. We hope we can get some help from users in countries where vertically rendered captions/subtitles are the norm.

4. Best Practices

Some of the WebVTT users at FOMS suggested it would be advantageous to start a list of “best practices” for how to author captions with WebVTT. Example recommendations are:

- Use line numbers only to position cues from top or bottom of viewport. Don’t use otherwise.

- Note that when the user increases the fontsize in rollup captions and thus introduces new line breaks, your cues will roll by faster because the number of lines of a rollup is fixed.

- Make sure to use &lrm; and &rlm; UTF-8 markers to control the directionality of your text.

It would be nice if somebody started such a document.

5. Non-caption use cases

Instead of continuing to look back and improve our support of captions/subtitles in WebVTT, one session at FOMS also went ahead and looked forward to other use cases. The following requirements came out of this:

5.1 Preview Thumbnails

A common use case for timed data is the use of preview thumbnails on the navigation bar of videos. A native implementation of preview thumbnails would allow crawlers and search engines to have a standardised way of extracting timed images for media files, so introduction of a new @kind value “thumbnails” was suggested.

The content of a “thumbnails” cue could be any of:

- an image URL

- a sprite URL to a single image

- a spatial & temporal media fragment URL to a media resource

- base64 encoded image (data URI)

- an iframe offset to the media resource

The suggestion is to allow anything that would work in an img @src attribute as value in a cue of @kind=“thumbnails”. Responsive images might also be useful for a track of @kind=“thumbnails”. It may even be possible to define an inband thumbnail track based on the track of @kind=“thumbnails”. Such cues should also work in the JavaScript track API.
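As an illustration, here is a minimal sketch of what a thumbnails track could look like and how a crawler might read it. A “thumbnails” kind is only a suggestion at this point, so the sample file, its image paths and the `parse_thumbnail_cues` helper are hypothetical; the parser is deliberately simplistic and only reads the first payload line of each cue.

```python
import re

# WebVTT timestamp: optional hours, then mm:ss.ttt
TIMESTAMP = re.compile(r"(?:(\d+):)?(\d{2}):(\d{2})\.(\d{3})")


def to_seconds(ts):
    m = TIMESTAMP.fullmatch(ts)
    if m is None:
        raise ValueError(f"not a WebVTT timestamp: {ts!r}")
    hours, minutes, seconds, millis = m.groups()
    return (int(hours or 0) * 3600 + int(minutes) * 60
            + int(seconds) + int(millis) / 1000)


def parse_thumbnail_cues(vtt_text):
    """Return a list of (start, end, image_reference) tuples."""
    cues = []
    lines = vtt_text.splitlines()
    for i, line in enumerate(lines):
        if "-->" not in line:
            continue
        start, rest = line.split("-->", 1)
        end = rest.split()[0]  # ignore any cue settings after the end time
        payload = lines[i + 1].strip() if i + 1 < len(lines) else ""
        cues.append((to_seconds(start.strip()), to_seconds(end), payload))
    return cues


# Hypothetical thumbnails track: a plain image URL and a sprite addressed
# with a spatial media fragment.
SAMPLE = """WEBVTT

00:00:00.000 --> 00:00:05.000
thumbs/0001.jpg

00:00:05.000 --> 00:00:10.000
sprite.jpg#xywh=160,0,160,90
"""

cues = parse_thumbnail_cues(SAMPLE)
```

Each payload is kept as an opaque string, in line with the idea that anything valid in an img @src attribute should be allowed.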

5.2 Chapter markers

There is interest in putting richer content than just a chapter title into chapter cues. Often, chapters consist of a title, text and an image. The text is not so important, but the image is used almost everywhere that chapters are used. There may be a need to extend chapter cue content with images, similar to what a @kind=“thumbnails” track offers.

The conclusion that we arrived at was that we need to make @kind=“thumbnails” work first and then look at using the learnings from that to extend @kind=“chapters”.

5.3 Inband tracks for live video

A difficult topic was opened with the question of how to transport text tracks in live video. In live captioning, end times are never created for cues, but are implied by the start time of the next cue. This is a use case that hasn’t been addressed in HTML5/WebVTT yet. An old proposal to allow a special end time value of “NEXT” was discussed and recommended for adoption. Also, there was support for the spec change that stops the loading of a VTT file from blocking until all of its cues have been loaded.
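The “NEXT” proposal boils down to a simple rule, sketched below. The tuple representation of a cue and the function name `resolve_next_end_times` are assumptions for this example; the only thing taken from the proposal is that an end time of “NEXT” means “until the next cue starts”.

```python
def resolve_next_end_times(cues):
    """Replace "NEXT" end times with the start time of the following cue.

    cues: list of (start, end, text) tuples sorted by start time, where
    end is either a number of seconds or the string "NEXT".
    The last cue keeps an end of None if its end is still unknown.
    """
    resolved = []
    for i, (start, end, text) in enumerate(cues):
        if end == "NEXT":
            end = cues[i + 1][0] if i + 1 < len(cues) else None
        resolved.append((start, end, text))
    return resolved


live = [
    (0.0, "NEXT", "Good evening."),
    (2.5, "NEXT", "Here is the news."),
    (4.0, 6.0, "First, the weather."),
]
resolved = resolve_next_end_times(live)
```

In a live stream the last cue stays open-ended until the next cue arrives, which is why the sketch leaves its end time as `None` rather than guessing.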

5.4 Cross-domain VTT loading

A brief discussion centered around the fact that the spec disallows cross-domain loading of WebVTT files, but that no browser implements this. This needs to be discussed at the HTML WG level.

6. Regions in live captioning

The final topic that we discussed was how we could provide support for regions in live captioning.

- The currently active region definitions will need to become part of the header of every VTT file segment that HLS uses, so they’re available in case the cues in the segment file reference them.

- “NEXT” in end time markers would make authoring of live captioned VTT files easier.

- If the application wants to use 1 word at a time and doesn’t want to delay sending the word until the full cue is authored (e.g. in a Hangout type environment), we will need to introduce the concept of “cue continuation markers”, so we know that a cue could be extended with the next VTT file fragment.
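The first point above can be sketched as a small segment writer that repeats the active region definitions at the top of every segment. The `build_segment` function is hypothetical, and region definitions are passed through as opaque text blocks so that the sketch does not depend on the final region syntax; the sample block uses the `REGION` block form of the current WebVTT specification, which may differ from the draft syntax discussed at FOMS.

```python
def build_segment(active_regions, cue_blocks):
    """Assemble one VTT segment file as text.

    active_regions: dict mapping region id to its definition block (text).
    cue_blocks: list of cue blocks (timing line plus payload) as text.
    """
    parts = ["WEBVTT"]
    parts.extend(active_regions.values())  # repeated in every segment
    parts.extend(cue_blocks)
    return "\n\n".join(parts) + "\n"


active_regions = {"r1": "REGION\nid:r1\nlines:3\nscroll:up"}

segment_1 = build_segment(
    active_regions,
    ["00:00:10.000 --> 00:00:12.000 region:r1\nHello"],
)
segment_2 = build_segment(
    active_regions,
    ["00:00:12.000 --> 00:00:14.000 region:r1\nworld"],
)
```

Because both segments carry the same definition of `r1`, a player that joins the stream at the second segment can still resolve the region that its cues reference.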

This is an extensive and impressive amount of discussion around WebVTT and a lot of new work to be performed in the future. I’m very grateful for all the people who have contributed to these discussions at FOMS and will hopefully continue to help get the specifications right.

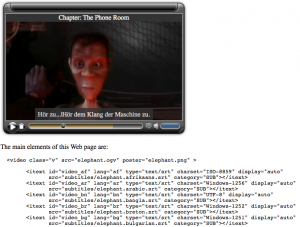

Screenshot of first itext video player experiment