adaptive HTTP streaming for open codecs

At this week’s FOMS in New York we had one over-arching topic that seemed to be of interest to every single participant: how to do adaptive bitrate streaming over HTTP for open codecs. On the first day, there was a general discussion about the advantages and disadvantages of adaptive HTTP streaming, while on the second day, we moved towards designing a solution for Ogg and WebM. While I didn’t attend all the discussions, I want to summarize the insights that I took out of the days in this blog post and the alternative implementation strategies that were came up with.

Use Cases for Adaptive HTTP Streaming

Streaming using RTP/RTSP has in the past been the main protocol to provide live video streams, either for broadcast or for real-time communication. It has been purpose-built for chunked video delivery and has features that many customers want, such as the ability to encrypt the stream, to tell players not to store the data, and to monitor the performance of the stream such that its bandwidth can be adapted. It has, however, also many disadvantages, not least that it goes over ports that normal firewalls block and thus is rather difficult to deploy, but also that it requires special server software, a client that speaks the protocol, and has a signalling overhead on the transport layer for adapting the stream.

RTP/RTSP has been invented to allow for high quality of service video consumption. In the last 10 years, however, it has become the norm to consume “canned” video (i.e. non-live video) over HTTP, making use of the byte-range request functionality of HTTP for seeking. While methods have been created to estimate the size of a pre-buffer before starting to play back in order to achieve continuous playback based on the bandwidth of your pipe at the beginning of downloading, not much can be done when one runs out of pre-buffer in the middle of playback or when the CPU on the machine doesn’t manage to catch up with decoding of the sheer amount of video data: your playback stops to go into re-buffering in the first case and starts to become choppy in the latter case.

An obvious approach to improving this situation is the scale the bandwidth of the video stream down, potentially even switch to a lower resolution video, right in the middle of playback. Apple’s HTTP live streaming, Microsoft’s Smooth Streaming, and Adobe’s Dynamic Streaming are all solutions in this space. Also, ISO/MPEG is working on DASH (Dynamic Adaptive Streaming over HTTP) is an effort to standardize the approach for MPEG media. No solution yets exist for the open formats within Ogg or WebM containers.

Some features of HTTP adaptive streaming are:

- Enables adaptation of downloading to avoid continuing buffering when network or machine cannot cope.

- Gapless switching between streams of different bitrate.

- No special server software is required - any existing Web Server can be used to provide the streams.

- The adaptation comes from the media player that actually knows what quality the user experiences rather than the network layer that knows nothing about the performance of the computer, and can only tell about the performance of the network.

- Adaptation means that several versions of different bandwidth are made available on the server and the client switches between them based on knowledge it has about the video quality that the user experiences.

- Bandwidth is not wasted by downloading video data that is not being consumed by the user, but rather content is pulled moments just before it is required, which works both for the live and canned content case and is particularly useful for long-form content.

Viability

In discussions at FOMS it was determined that mid-stream switching between different bitrate encoded audio files is possible. Just looking at the PCM domain, it requires stitching the waveform together at the switch-over point, but that is not a complex function. To be able to do that stitching with Vorbis-encoded files, there is no need for a overlap of data, because the encoded samples of the previous window in a different bitrate page can be used as input into the decoding of the current bitrate page, as long as the resulting PCM samples are stitched.

For video, mid-stream switching to a different bitrate encoded stream is also acceptable, as long as the switch-over point adheres to a keyframe, which can be independently decoded.

Thus, the preparation of the alternative bitstream videos requires temporal synchronisation of keyframes on video - the audio can deal with the switch-over at any point. A bit of intelligent encoding is thus necessary - requiring the encoding pipeline to provide regular keyframes at a certain rate would be sufficient. Then, the switch-over points are the keyframes.

Technical Realisation

With the solutions from Adobe, Microsoft and Apple, the technology has been created such there are special tools on the server that prepare the content for adaptive HTTP streaming and provide a manifest of the prepared content. Typically, the content is encoded in versions of different bitrates and the bandwidth versions are broken into chunks that can be decoded independently. These chunks are synchronised between the different bitrate versions such that there are defined switch-over points. The switch-over points as well as the file names of the different chunks are documented inside a manifest file. It is this manifest file that the player downloads instead of the resource at the beginning of streaming. This manifest file informs the player of the available resources and enables it to orchestrate the correct URL requests to the server as it progresses through the resource.

At FOMS, we took a step back from this approach and analysed what the general possibilities are for solving adaptive HTTP streaming. For example, it would be possible to not chunk the original media data, but instead perform range requests on the different bitrate versions of the resource. The following options were identified.

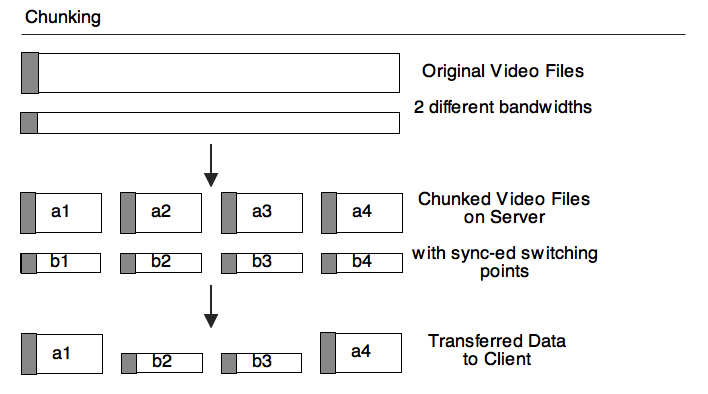

Chunking

With Chunking, the original bitrate versions are chunked into smaller full resources with defined switch-over points. This implies creation of a header on each one of the chunks and thus introduces overhead. Assuming we use 10sec chunks and 6kBytes per chunk, that results in 5kBit/sec extra overhead. After chunking the files this way, we provide a manifest file (similar to Apple’s m3u8 file, or the SMIL-based manifest file of Microsoft, or Adobe’s Flash Media Manifest file). The manifest file informs the client about the chunks and the switch-over points and the client requests those different resources at the switch-over points.

Disadvantages:

- Header overhead on the pipe.

- Switch-over delay for decoding the header.

- Possible problem with TCP slowstart on new files.

- A piece of software is necessary on server to prepare the chunked files.

- A large amount of files to manage on the server.

- The client has to hide the switching between full resources.

Advantages:

- Works for live streams, where increasing amounts of chunks are written.

- Works well with CDNs, because mid-stream switching to another server is easy.

- Chunks can be encoded such that there is no overlap in the data necessary on switch-over.

- May work well with Web sockets.

- Follows the way in which proprietary solutions are doing it, so may be easy to adopt.

- If the chunks are concatenated on the client, you get chained Ogg files (similar concept in WebM?), which are planned to be supported by Web browsers and are thus legal files.

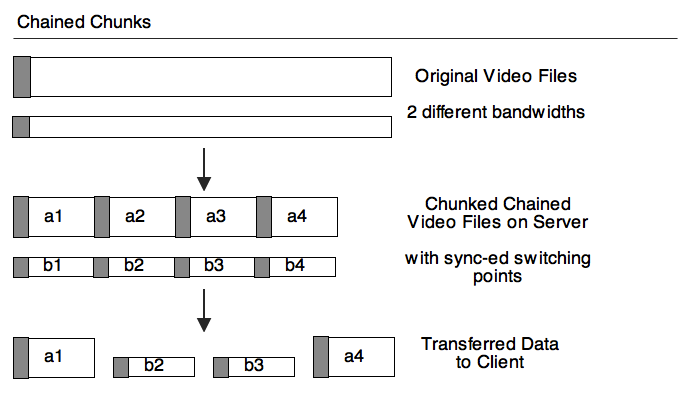

Chained Chunks

Alternatively to creating the large number of files, one could also just create the chained files. Then, the switch-over is not between different files, but between different byte ranges. The headers still have to be read and parsed. And a manifest file still has to exist, but it now points to byte ranges rather than different resources.

Advantages over Chunking:

- No TCP-slowstart problem.

- No large number of files on the server.

Disadvantages over Chunking:

- Mid-stream switching to other servers is not easily possible - CDNs won’t like it.

- Doesn’t work with Web sockets as easily.

- New approach that vendors will have to grapple with.

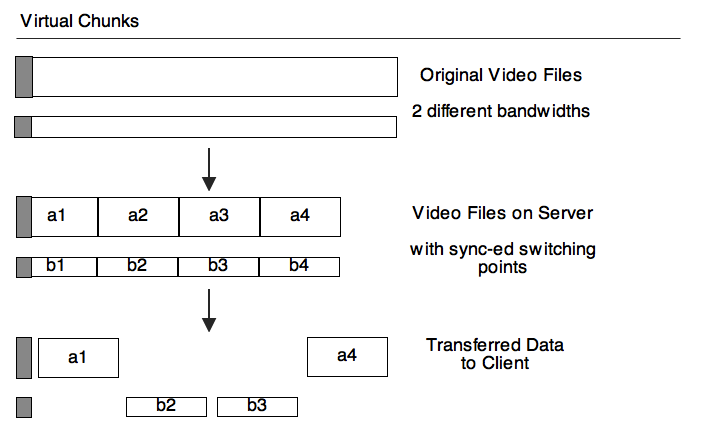

Virtual Chunks

Since in Chained Chunks we are already doing byte-range requests, it is a short step towards simply dropping the repeating headers and just downloading them once at the beginning for all possible bitrate files. Then, as we seek to different positions in “the” file, the byte range of the bitrate version that makes sense to retrieve at that stage would be requested. This could even be done with media fragment URIs, through addressing with time ranges is less accurate than explicit byte ranges.

In contrast to the previous two options, this basically requires keeping n different encoding pipelines alive - one for every bitrate version. Then, the byte ranges of the chunks will be interpreted by the appropriate pipeline. The manifest now points to keyframes as switch-over points.

Advantage over Chained Chunking:

- No header overhead.

- No continuous re-initialisation of decoding pipelines.

Disadvantages over Chained Chunking:

- Multiple decoding pipelines need to be maintained and byte ranges managed for each.

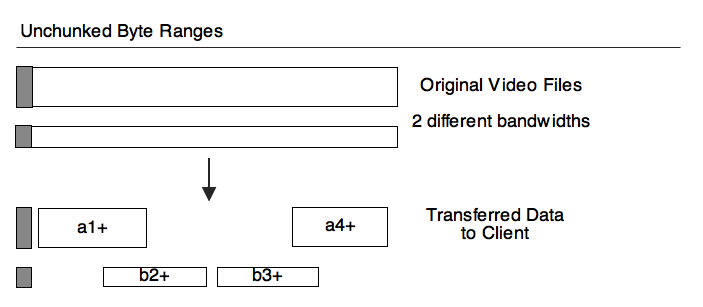

Unchunked Byte Ranges

We can even consider going all the way and not preparing the alternative bitrate resources for switching, i.e. not making sure that the keyframes align. This will then require the player to do the switching itself, determine when the next keyframe comes up in its current stream then seek to that position in the next stream, always making sure to go back to the last keyframe before that position and discard all data until it arrives at the same offset.

Disadvantages:

- There will be an overlap in the timeline for download, which has to be managed from the buffering and alignment POV.

- Overlap poses a challenge of downloading more data than necessary at exactly the time where one doesn’t have bandwidth to spare.

- Requires seeking.

- Messy.

Advantages:

- No special authoring of resources on the server is needed.

- Requires a very simple manifest file only with a list of alternative bitrate files.

Final concerns

At FOMS we weren’t able to make a final decision on how to achieve adaptive HTTP streaming for open codecs. Most agreed that moving forward with the first case would be the right thing to do, but the sheer number of files that can create is daunting and it would be nice to avoid that for users.

Other goals are to make it work in stand-alone players, which means they will need to support loading the manifest file. And finally we want to enable experimentation in the browser through JavaScript implementation, which means there needs to be an interface to provide the quality of decoding to JavaScript. Fortunately, a proposal for such a statistics API already exists. The number of received frames, the number of dropped frames, and the size of the video are the most important statistics required.