In recent months, people in the W3C HTML5 Accessibility Task Force developed two proposals for introducing caption, subtitle, and more generally time-aligned text support into HTML5 audio and video.

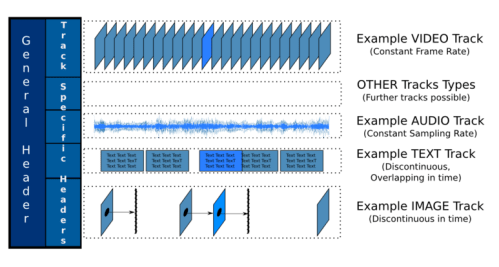

Time-aligned text can either come in external files that are associated with the timeline of the media resource, or as part of the media resource in a binary track.

For both cases we now have proposals to extend the HTML5 specification.

Firstly, let’s look at time-aligned text in external files. The change proposal introduces markup to associate such external files as a kind of “virtual track” with a media resource. Here is an example:

    <video src="video.ogv">
      <track src="video_cc.ttml" type="application/ttaf+xml" language="en" role="caption"></track>
      <track src="video_tad.srt" type="text/srt" language="en" role="textaudesc"></track>
      <trackgroup role="subtitle">
        <track src="video_sub_en.srt" type="text/srt; charset='Windows-1252'" language="en"></track>
        <track src="video_sub_de.srt" type="text/srt; charset='ISO-8859-1'" language="de"></track>
        <track src="video_sub_ja.srt" type="text/srt; charset='EUC-JP'" language="ja"></track>
      </trackgroup>
    </video>

The video resource is “video.ogv”. Associated with it are five timed text resources.

The first one is an English caption track written in TTML (which is the new name for DFXP). TTML is particularly useful when you want to provide more than just an unformatted piece of text to the viewers. Hearing-impaired users appreciate any visual help that lets them absorb the caption text more quickly. This includes colour coding of speakers, positioning of text close to the speaking person on screen, or even animated musical notes to signify music. Thus, a format like TTML that allows for formatting and positioning information is an appropriate format to specify captions.

All other timed text resources are provided in SRT format, which is a simpler format than TTML with only plain text in the text cues.

The second text track is a textual audio description track. A textual audio description is in fact targeted at the vision-impaired and contains text that is expected to be read out by a screen reader or routed to a braille device. Thus, as the video plays, a vision-impaired user receives additional information about the visual content of the scene through their screen reader or braille device. The SRT format is particularly useful for providing textual audio descriptions since it only provides plain text, which can easily be handed on to assistive technology. When authoring such textual audio descriptions, it is very important to pick time intervals in the original media resource where no other significant audio cue is provided, such that the vision-impaired user is able to listen to the screen reader during that time.
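To illustrate why plain-text cues are easy to hand on to assistive technology, here is a minimal sketch of reading SRT text into a list of cues. It assumes the common SRT layout (a counter line, a timing line of the form `HH:MM:SS,mmm --> HH:MM:SS,mmm`, then one or more text lines, with blank lines between cues). The function names `parseSrtTime` and `parseSrt` and the sample text are made up for this illustration, and the sketch ignores character encoding and malformed input.

```javascript
// Convert an SRT timestamp such as "00:00:12,000" into seconds.
function parseSrtTime(stamp) {
  const m = /^(\d+):(\d{2}):(\d{2})[,.](\d{3})$/.exec(stamp.trim());
  if (!m) throw new Error("Bad SRT timestamp: " + stamp);
  return Number(m[1]) * 3600 + Number(m[2]) * 60 + Number(m[3]) + Number(m[4]) / 1000;
}

// Split SRT text into cues of the (assumed) shape { start, end, text }.
function parseSrt(text) {
  return text
    .replace(/\r\n?/g, "\n") // normalise line endings
    .trim()
    .split(/\n{2,}/) // cues are separated by blank lines
    .filter((block) => block.length > 0)
    .map((block) => {
      const lines = block.split("\n");
      // lines[0] is the cue counter, lines[1] is the timing line.
      const [start, end] = lines[1].split("-->");
      return {
        start: parseSrtTime(start),
        end: parseSrtTime(end),
        text: lines.slice(2).join("\n"),
      };
    });
}

// Made-up sample of a textual audio description file.
const sample =
  "1\n00:00:12,000 --> 00:00:15,500\nA woman walks into a dimly lit room.\n\n" +
  "2\n00:00:31,250 --> 00:00:34,000\nShe opens the window.";

const descriptions = parseSrt(sample);
```

Each resulting cue carries nothing but a time interval and a string, which is all a screen reader or braille device needs.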

The last three text tracks are subtitle tracks. They are grouped into a trackgroup element, which is not strictly necessary, but enables the author to say that these tracks are supposed to be alternatives. Thus, a Web Browser can create a menu with all the available tracks and put the tracks in the trackgroup into a menu of their own where only one option is selectable (similar to how radio buttons work). Incidentally, the trackgroup element also avoids having to repeat the role attribute on each of the tracks it contains. It is expected that these menus will be added to the default media controls and will thus be visible if the media element has a controls attribute.
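The radio-button behaviour can be sketched in plain JavaScript. This is only an illustration of the selection logic, not how any browser implements it: the track descriptors are plain objects standing in for the five tracks of the example markup, and the `group` field and the `selectTrack` function are hypothetical names that do not appear in the proposal.

```javascript
// Simplified stand-ins for the five tracks in the example markup.
// "group" is an assumed field marking membership in a trackgroup.
const tracks = [
  { src: "video_cc.ttml", role: "caption", language: "en", group: null, enabled: false },
  { src: "video_tad.srt", role: "textaudesc", language: "en", group: null, enabled: false },
  { src: "video_sub_en.srt", role: "subtitle", language: "en", group: "subtitle", enabled: false },
  { src: "video_sub_de.srt", role: "subtitle", language: "de", group: "subtitle", enabled: false },
  { src: "video_sub_ja.srt", role: "subtitle", language: "ja", group: "subtitle", enabled: false },
];

// Enable one track. If it belongs to a group, its siblings are disabled
// first, so at most one track per group is ever active.
function selectTrack(trackList, index) {
  const chosen = trackList[index];
  if (chosen.group !== null) {
    for (const t of trackList) {
      if (t.group === chosen.group) t.enabled = false;
    }
  }
  chosen.enabled = true;
}

selectTrack(tracks, 0); // captions on
selectTrack(tracks, 2); // English subtitles on
selectTrack(tracks, 3); // German subtitles replace the English ones
```

After these three calls the caption track and the German subtitle track are enabled, while the English subtitle track has been switched off again.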

With the role, type and language attributes, it is easy for a Web Browser to understand what the different tracks have to offer. A Web Browser can even decide to offer new functionality that is helpful to certain user groups. For example, an addition to a Web Browser’s default settings could be to allow users to instruct a Web Browser to always turn on captions or subtitles if they are available in the user’s main language, or to always turn on textual audio descriptions. In this way, a user can customise their default experience of a media resource over and above what a Web page author decides to expose.
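Such a preference could be matched against the attributes roughly as follows. This is a sketch under assumptions: the shape of the `userPrefs` object and the function name `pickDefaultTracks` are invented for the illustration, and the track list is again a set of plain objects rather than real track elements.

```javascript
// Hypothetical user preferences; the field names are assumptions.
const userPrefs = { language: "de", roles: ["caption", "subtitle"] };

// Return the tracks a browser might turn on by default: those whose role
// the user asked for and whose language matches the user's main language.
function pickDefaultTracks(trackList, prefs) {
  return trackList.filter(
    (t) => prefs.roles.includes(t.role) && t.language === prefs.language
  );
}

// Role and language of the five tracks in the example markup.
const available = [
  { role: "caption", language: "en" },
  { role: "textaudesc", language: "en" },
  { role: "subtitle", language: "en" },
  { role: "subtitle", language: "de" },
  { role: "subtitle", language: "ja" },
];

// For a German-speaking user, only the German subtitle track matches.
const defaults = pickDefaultTracks(available, userPrefs);
```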

Incidentally, the choice of “track” as a name for relating external text resources to a media element has a deeper meaning. It would be easy in future to extend “track” elements to not just point to dependent text resources, but also to dependent audio or video resources. For example, an actual audio description that is a recording of a human voice rather than a rendered text description could be associated in the same way. Right now, such an implementation is not envisaged by the Browser vendors, but it will be something to work towards in the future.

Now, with such functionality available, there is naturally a desire to be able to control activation or de-activation of text tracks through JavaScript, not just through user interaction. A Web Developer may for example want to override the default controls provided by a Web Browser and run their own JavaScript-based controls, and would then need to create their own selection menu for the tracks.

This is actually a more general issue that applies to all track types, including tracks that come inside an existing media resource. In the current specification such tracks are not exposed and can therefore not be activated.

This is where the second specification that the W3C HTML5 Accessibility Task Force has been working on comes in: the media multitrack JavaScript API.

This specification introduces a read-only JavaScript interface to the audio and video elements to allow Web Developers to find out about the tracks (including the virtual tracks) that a media resource offers. The only action that the interface currently provides is to enable or disable tracks.

Here is an example use to turn on a French subtitle track:

    if (video.tracks[2].role == "subtitle" && video.tracks[2].language == "fr")
      video.tracks[2].enabled = true;
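The one-line example above hard-codes index 2. A script would more likely search the track list, which could look like the following sketch. It relies only on the `role`, `language` and `enabled` properties used above, and additionally assumes that the track list has a `length`. The helper name `enableTrack` is made up, and `mockTracks` is a stand-in for `video.tracks` so that the sketch runs outside a browser.

```javascript
// Enable the first track with the given role and language.
// Returns true if a matching track was found, false otherwise.
function enableTrack(trackList, role, language) {
  for (let i = 0; i < trackList.length; i++) {
    const t = trackList[i];
    if (t.role === role && t.language === language) {
      t.enabled = true;
      return true;
    }
  }
  return false;
}

// Stand-in for video.tracks (an assumption for this illustration).
const mockTracks = [
  { role: "caption", language: "en", enabled: false },
  { role: "subtitle", language: "en", enabled: false },
  { role: "subtitle", language: "fr", enabled: false },
];

enableTrack(mockTracks, "subtitle", "fr"); // finds and enables the French track
enableTrack(mockTracks, "subtitle", "es"); // no Spanish track, nothing changes
```

Returning a boolean lets a custom control fall back to another language when the preferred one is not offered.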

There is still a need to introduce a means to actually expose the text cues as they relate to the currentTime of the media resource. This has not yet been specified in the given proposals.

The text cues could be exposed in several ways. They could be exposed through introducing an event, i.e. every time a new text cue becomes active, a callback is called which is given the active text cue (if such a callback has been registered previously). Another option is to simply write the text cues into a specified div element in the DOM and thus expose them directly in the Browser. A third idea could be to expose the text cues in an iframe-like element to avoid any cross-site security issues. And a fourth idea that we have discussed is to expose the text cues in an attribute of the track.
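To make the first, event-based option more tangible, here is a rough sketch of the idea in plain JavaScript. None of it is specified anywhere: the cue shape (`start`, `end`, `text`), the function `createCueWatcher` and the sample cues are all assumptions made for the illustration. In a browser, the `update` function would be driven by the playback position, for example from a `timeupdate` handler that passes in `currentTime`.

```javascript
// Hypothetical cue shape: start and end in seconds plus plain text.
const cues = [
  { start: 1.0, end: 4.0, text: "Hello." },
  { start: 5.0, end: 8.0, text: "Welcome back." },
];

// Create a watcher that calls onCue once each time a cue becomes active.
// The returned function is fed the current playback time.
function createCueWatcher(cueList, onCue) {
  let active = null;
  return function update(currentTime) {
    const now =
      cueList.find((c) => currentTime >= c.start && currentTime < c.end) || null;
    if (now !== null && now !== active) onCue(now);
    active = now;
  };
}

// Simulate playback by feeding a few time values to the watcher.
const seen = [];
const update = createCueWatcher(cues, (cue) => seen.push(cue.text));
for (const time of [0.5, 1.2, 2.0, 4.5, 5.1, 6.0]) update(time);
// seen now holds "Hello." and "Welcome back.", each reported once
```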

All of this obviously also relates to how to actually render the text cues and whether to render them in a shadow DOM so as to make the JavaScript reading separate from the rendering and address security and copyright issues. I’d be curious to hear opinions here on how it should be done.